Key Components of Conversational AI Explained

The technology stack behind conversational AI, covering deep learning, transformers, generative AI, NLP, and large language models, and how they power systems that understand language, reason over context, and generate responses.

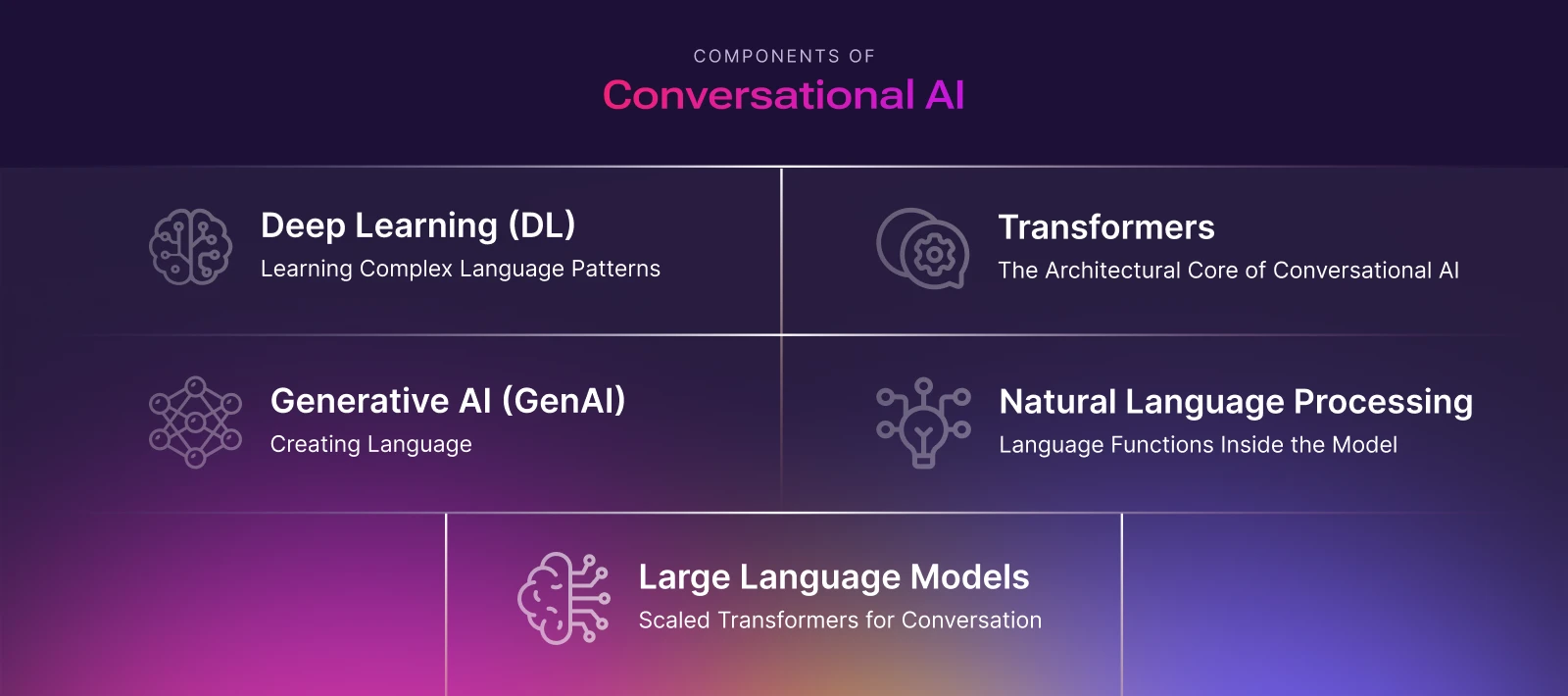

Conversational AI is not a single technology but a stack of interconnected capabilities that work together to understand human language, reason over context, and generate relevant responses. These components relate like nesting dolls: each layer builds on the foundations of the previous one, while sharing a common underlying architecture. Together, they form the intelligence layer of modern conversational systems.

At the core of this stack is deep learning, and more specifically, the transformer architecture, which unifies language understanding, generation, and long-context reasoning across text and speech.

Deep Learning (DL): Learning Complex Language Patterns

Deep Learning (DL) is a subset of machine learning that uses multi-layered neural networks to learn directly from large volumes of data such as text, audio, and images. In conversational AI, deep learning enables systems to move beyond rigid rules and shallow statistical models to capture the fluid, contextual nature of human language.

Earlier deep learning approaches to language, such as recurrent neural networks (RNNs) and convolutional neural networks (CNNs), struggled with long-range context and sequential dependencies. Modern conversational AI overcomes these limitations through transformer-based deep learning, which can process entire sequences in parallel and relate distant parts of a conversation effectively.

As a result, nearly all state-of-the-art conversational capabilities today, such as speech recognition (ASR), language understanding and reasoning (LLMs), response generation, and speech synthesis (TTS), are powered by deep learning models built on transformer architectures.

Transformers: The Architectural Core of Conversational AI

Transformers are the core neural network architecture that ties together deep learning, generative AI, NLP, and large language models in modern conversational AI. Rather than processing language step by step, transformers use self-attention to evaluate how every part of an input sequence relates to every other part.

This allows conversational systems to:

- Understand meaning using global context, not just local word order

- Track references across long, multi-turn conversations

- Validate intent and meaning based on prior dialogue and metadata

Transformers operate on sequences of tokens (words, sub-words, characters, or speech/audio features in ASR and TTS) that are converted into embeddings and refined through multiple stacked attention layers. At higher layers, each token's representation encodes rich semantic, syntactic, and contextual information. This architecture is what enables conversational AI systems to remain coherent, adaptive, and context-aware over time.
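To make the self-attention step concrete, here is a minimal sketch in plain Python. It is an illustration under simplifying assumptions, not how any production model is implemented: the token vectors are made-up two-dimensional values, there is a single attention head, and the embeddings serve directly as queries, keys, and values, whereas a real transformer first applies learned projection matrices.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(embeddings):
    """Single-head scaled dot-product self-attention.

    Simplification: the embeddings act as queries, keys, and values.
    """
    dim = len(embeddings[0])
    outputs = []
    for query in embeddings:
        # Score this token against every token in the sequence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in embeddings
        ]
        weights = softmax(scores)
        # New representation: attention-weighted mix of all token vectors.
        mixed = [
            sum(w * value[i] for w, value in zip(weights, embeddings))
            for i in range(dim)
        ]
        outputs.append(mixed)
    return outputs

# Toy embeddings for three tokens (illustrative values only).
tokens = ["book", "a", "flight"]
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
contextual = self_attention(vectors)

for token, vector in zip(tokens, contextual):
    print(token, [round(x, 3) for x in vector])
```

Each output vector blends information from the whole sequence, which is what lets higher layers encode context rather than isolated words.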

Generative AI (GenAI): Creating Language, Not Just Classifying It

Generative AI is a specialized application of deep learning focused on producing new content rather than merely analyzing or classifying existing data. In conversational AI, this means generating natural language responses dynamically instead of selecting from predefined scripts.

Transformer-based generative models learn a probability distribution over token sequences, predicting the next token from all previous tokens and context. During a conversation, the model generates one token at a time, conditioning each new step on the evolving dialogue history, instructions, and retrieved knowledge.

This mechanism allows conversational systems to:

- Explain concepts in natural language

- Summarize information on the fly

- Adapt tone and detail to each user interaction
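The token-by-token loop described above can be sketched as follows. This is a toy illustration: the `NEXT_TOKEN_PROBS` table is a hypothetical stand-in for a trained model, it conditions only on the last token rather than the full history, and it uses greedy decoding (always picking the most likely token), whereas deployed systems often sample instead.

```python
# Hypothetical next-token probabilities standing in for a trained model.
NEXT_TOKEN_PROBS = {
    "<start>": {"your": 0.6, "the": 0.4},
    "your": {"appointment": 0.7, "order": 0.3},
    "the": {"appointment": 0.5, "order": 0.5},
    "appointment": {"is": 0.9, "<end>": 0.1},
    "order": {"is": 0.8, "<end>": 0.2},
    "is": {"confirmed": 0.6, "pending": 0.4},
    "confirmed": {"<end>": 1.0},
    "pending": {"<end>": 1.0},
}

def next_token_distribution(history):
    """Toy stand-in for the model: looks only at the last token.

    A transformer would condition on the entire history.
    """
    return NEXT_TOKEN_PROBS[history[-1]]

def generate(max_tokens=10):
    """Generate tokens one at a time until <end> or the length limit."""
    history = ["<start>"]
    for _ in range(max_tokens):
        probs = next_token_distribution(history)
        token = max(probs, key=probs.get)  # greedy: most likely token
        if token == "<end>":
            break
        history.append(token)  # the new token becomes part of the context
    return history[1:]

print(" ".join(generate()))  # prints "your appointment is confirmed"
```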

Natural Language Processing (NLP): Language Functions Inside the Model

Natural Language Processing (NLP) is the discipline focused on enabling machines to work with human language. Traditionally, NLP systems were composed of separate modules: intent classifiers, entity extractors, sentiment analyzers, and templated generators. These were often built using rules or lightweight statistical models.

In modern conversational AI, these functions are largely unified inside transformer-based models. Rather than treating understanding and generation as separate steps, a single model interprets user input holistically, using conversation history, context, and metadata to infer meaning and produce responses.

Capabilities that once required distinct NLP pipelines, such as intent detection, entity extraction, summarization, translation, and question answering, now emerge as behaviors of a shared transformer backbone. NLP no longer sits beside the model; it happens within it.

Large Language Models (LLMs): Scaled Transformers for Conversation

Large Language Models (LLMs) represent the most advanced class of transformer-based generative models. They are large-scale transformers trained on massive text corpora with objectives such as next-token prediction or masked-token reconstruction.
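The two training objectives named above differ in what the model is asked to predict. The sketch below shows how training examples could be built for each from one tokenized sentence. The function names and the `[MASK]` placeholder are illustrative choices, and real training pipelines operate on sub-word token IDs in large batches rather than word lists.

```python
def next_token_examples(tokens):
    """Next-token objective: predict each token from the tokens before it."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

def masked_token_example(tokens, position, mask="[MASK]"):
    """Masked objective: hide one token and predict it from both sides."""
    masked = list(tokens)
    target = masked[position]
    masked[position] = mask
    return masked, target

sentence = ["i", "might", "miss", "my", "appointment"]

causal = next_token_examples(sentence)
masked_input, masked_target = masked_token_example(sentence, 2)

print(causal[0])                    # (['i'], 'might')
print(masked_input, masked_target)  # ['i', 'might', '[MASK]', 'my', 'appointment'] miss
```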

What distinguishes LLMs is not a fundamentally new mechanism, but scale:

- More parameters

- More data

- Longer context windows

At sufficient scale, transformer models exhibit emergent capabilities such as few-shot learning, long-range reasoning, instruction following, and coherent multi-turn dialogue. In conversational AI systems, LLMs act as the central "language brain" that interprets user input, reasons over prior turns, and generates contextually appropriate responses in real time.

Older chatbots used separate NLU (Natural Language Understanding) systems to detect intent and extract entities. Modern conversational AI integrates this understanding directly into the LLM, which interprets user input using conversation history, metadata, and context.

For instance, if you say, "I'm late and might miss my appointment," the AI understands both the intent to reschedule and the implied urgency. It can respond naturally without requiring explicit instructions for every possible scenario.

In other words, the LLM "reads between the lines" like a human assistant who understands not only the words but also their meaning in context.

Transformers Across the Conversational AI Pipeline

Modern conversational AI systems are often multimodal, especially in voice-based experiences, and transformers play a central role at every stage of the pipeline:

- Automatic Speech Recognition (ASR) (Listening to the user): Transformer encoders model long-range temporal patterns in audio to convert speech into text. This is how the system "hears" what the user says.

- Dialogue and Reasoning (LLMs) (Understanding and deciding): Decoder-style transformers interpret the transcribed text, conversation history, and retrieved knowledge to decide what to say or do next, including calling tools and APIs.

- Text-to-Speech (TTS) (Speaking back to the user): Transformer-based TTS models generate prosody-aware intermediate representations that are turned into natural-sounding speech, allowing the assistant to respond in a consistent voice and style.

By using a shared architectural paradigm, conversational systems can maintain consistency, context, and control across listening (ASR), thinking (LLMs), and speaking (TTS).
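The listen, think, speak flow can be sketched as a simple chain of three stages. Everything here is a placeholder chosen for illustration: the `VoiceAssistant` class and its method names are hypothetical, "audio" is just UTF-8 bytes, and each stage stands in for a call to a real ASR, LLM, or TTS model.

```python
from dataclasses import dataclass, field

@dataclass
class VoiceAssistant:
    """Hypothetical wrapper chaining ASR, LLM, and TTS stages."""
    history: list = field(default_factory=list)

    def transcribe(self, audio: bytes) -> str:
        # Placeholder for an ASR model: the "audio" is UTF-8 text here.
        return audio.decode("utf-8")

    def respond(self, text: str) -> str:
        # Placeholder for an LLM call that would see the full history.
        self.history.append(("user", text))
        reply = f"You said: {text}"
        self.history.append(("assistant", reply))
        return reply

    def synthesize(self, text: str) -> bytes:
        # Placeholder for a TTS model: returns bytes instead of a waveform.
        return text.encode("utf-8")

    def handle_turn(self, audio: bytes) -> bytes:
        """One conversational turn: listen, think, speak."""
        return self.synthesize(self.respond(self.transcribe(audio)))

assistant = VoiceAssistant()
reply_audio = assistant.handle_turn(b"I might miss my appointment")
print(reply_audio.decode("utf-8"))
```

Keeping the dialogue history on one object is a design choice for the sketch; it shows where shared context would live so that every stage works from the same conversation state.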

Bringing It All Together

The technology used in conversational AI forms a clear hierarchy:

- Deep Learning: Neural networks for complex perception and language

- Transformers: The core architecture for sequence modeling and attention

- Generative AI: Models that create new content

- NLP: Language understanding and generation functions implemented within models

- Large Language Models: Scaled transformer models specialized for language

Together, they drive the intelligence of conversational AI systems. However, building and deploying production-ready conversational AI in 2026 takes more than models alone. These systems are integrated with orchestration layers, memory, safety mechanisms, and system controls that allow models to trigger actions, call tools, and operate reliably in real-world environments.

As a result, modern conversational AI is no longer purely reactive. By maintaining context, learning from behavior, and generating responses dynamically, it can anticipate user needs and offer timely assistance. For example, while a user is browsing a website's FAQs, a conversational assistant can proactively suggest relevant answers or shortcuts, creating a smoother, more intuitive experience.
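As a rough sketch of how an orchestration layer might let a model trigger actions safely, the example below dispatches a tool call that the model emits as JSON. The JSON shape, the `TOOLS` registry, and the `reschedule_appointment` function are all assumptions made for illustration; real platforms define their own tool-calling formats and add far more validation.

```python
import json

def reschedule_appointment(appointment_id: str, new_time: str) -> str:
    # Hypothetical business action; a real system would call a booking API.
    return f"Appointment {appointment_id} moved to {new_time}"

# Allowlist of actions the model is permitted to trigger.
TOOLS = {"reschedule_appointment": reschedule_appointment}

def dispatch(model_output: str) -> str:
    """Run a tool call emitted by the model as JSON.

    Calls to tools outside the allowlist are refused, a minimal
    example of a safety control around model-triggered actions.
    """
    call = json.loads(model_output)
    name = call.get("tool")
    if name not in TOOLS:
        return f"Refused: unknown tool {name!r}"
    return TOOLS[name](**call.get("arguments", {}))

allowed = dispatch(
    '{"tool": "reschedule_appointment", '
    '"arguments": {"appointment_id": "A1", "new_time": "15:00"}}'
)
blocked = dispatch('{"tool": "delete_everything"}')
print(allowed)
print(blocked)
```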
