Future of Conversational AI: Key Trends to Watch

Examines the future of conversational AI in 2026, highlighting trends such as workflow-oriented architectures, stronger orchestration, reliable voice interactions, retrieval-based grounding, and observability that enable scalable, production-grade systems embedded within real business operations.

Conversational AI in 2026 is no longer about shipping impressive demos or standalone chatbots. The direction is clear: the industry is moving toward production-grade conversational systems that are reliable, observable, and deeply integrated into real business workflows. Model quality still matters, but it is no longer the primary differentiator. What separates effective systems from fragile ones is how they are orchestrated, tested, grounded, and operated over time.

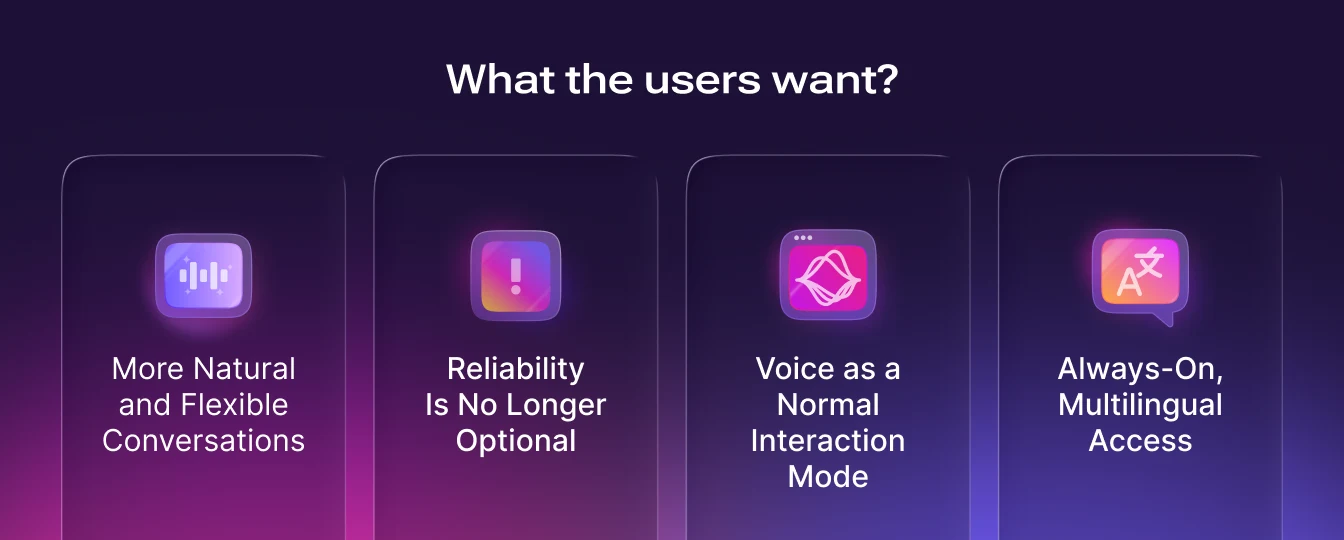

This shift becomes clearer when viewed through two lenses: what users expect from conversational AI, and what builders must do to meet those expectations.

Conversational AI in 2026: User Point of View

More Natural and Flexible Conversations

From the user’s perspective, conversational AI is becoming less rigid and more forgiving. Instead of navigating static FAQ trees or narrowly defined intents, users can ask complex, multi-step, and loosely phrased questions and still receive useful responses. This enables experiences such as AI tutors and coaches, health report explainers, and product assistants embedded directly into websites, messaging apps like WhatsApp, or internal enterprise tools. Together, these show how applications of conversational AI are expanding across industries.

In many cases, the conversational interface itself becomes the primary product, especially in education, coaching, and guided self-service scenarios.

Reliability Is No Longer Optional

As conversational AI becomes more common, user tolerance for failure decreases. Users now expect fewer dead ends, consistent behavior when policies are involved, and clear escalation to a human when issues become complex or high-risk. An AI that sounds natural but behaves unpredictably quickly loses credibility. In 2026, reliability is not a differentiator; it is a baseline expectation.

Voice as a Normal Interaction Mode

Voice interactions are increasingly accepted in everyday workflows. Users are comfortable with voice agents handling outbound reminders, lead outreach, and simple inbound requests, provided the interaction feels responsive and respectful of conversational norms. Latency, interruptibility (barge-in), and speech quality matter as much as correctness. Poor voice performance breaks trust faster than text-based errors.

Always-On, Multilingual Access

Users also expect conversational AI to be available around the clock and in multiple languages. This is particularly transformative for SMBs and global organizations that previously could not afford 24/7, multilingual support. Conversational AI is becoming a core access layer for global communication rather than a premium feature.

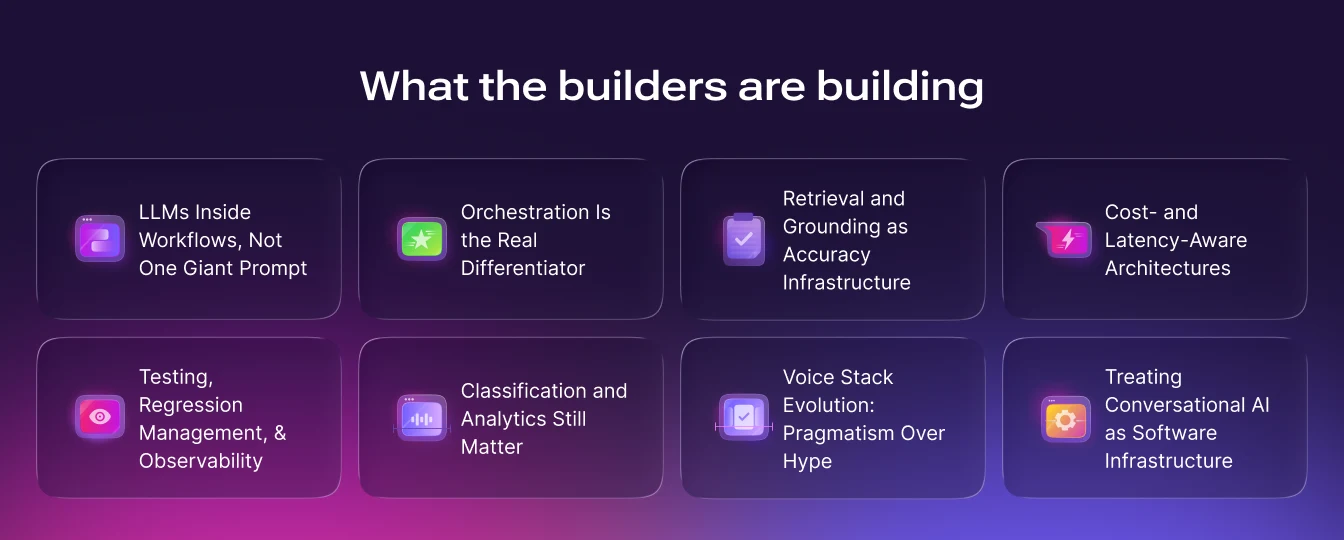

Conversational AI in 2026: Builder Point of View

LLMs Inside Workflows, Not One Giant Prompt

A key lesson emerging in 2026 is what not to do: builders should avoid shipping a single, massive “god prompt” that attempts to handle every scenario. These systems are fragile, difficult to debug, and impossible to measure effectively. Small changes can introduce unpredictable side effects, and failures are hard to localize.

Instead, builders are moving toward explicit workflows or state machines, where the conversation is broken into structured steps such as identifying user intent, authenticating, retrieving data, proposing options, and confirming actions. Within each step, the LLM performs a bounded task, such as selecting the next state or generating a response using specific tools. This approach trades maximal cleverness for predictability, control, and debuggability.
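The step-by-step structure described above can be sketched as a small state machine. This is a minimal illustration, not a reference design: the step names follow the article, while `classify_intent`, the four-digit code check, and the `A-100` order ID are hypothetical stand-ins for a bounded LLM call and real backend lookups.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Callable, Dict, List, Tuple


class Step(Enum):
    IDENTIFY_INTENT = auto()
    AUTHENTICATE = auto()
    RETRIEVE_DATA = auto()
    PROPOSE_OPTIONS = auto()
    CONFIRM_ACTION = auto()
    DONE = auto()


@dataclass
class Conversation:
    step: Step = Step.IDENTIFY_INTENT
    slots: Dict[str, str] = field(default_factory=dict)
    trace: List[Tuple[str, str]] = field(default_factory=list)


def classify_intent(text: str) -> str:
    # Stand-in for a bounded LLM call that must return one label from a fixed set.
    return "refund" if "refund" in text.lower() else "other"


def identify_intent(conv: Conversation, text: str) -> Step:
    conv.slots["intent"] = classify_intent(text)
    return Step.AUTHENTICATE


def authenticate(conv: Conversation, text: str) -> Step:
    # Hypothetical check: stay in this step until a 4-digit code arrives.
    code = text.strip()
    if code.isdigit() and len(code) == 4:
        return Step.RETRIEVE_DATA
    return Step.AUTHENTICATE


def retrieve_data(conv: Conversation, text: str) -> Step:
    conv.slots["order_id"] = "A-100"  # placeholder for a real lookup
    return Step.PROPOSE_OPTIONS


def propose_options(conv: Conversation, text: str) -> Step:
    conv.slots["offer"] = f"Refund order {conv.slots['order_id']}?"
    return Step.CONFIRM_ACTION


def confirm_action(conv: Conversation, text: str) -> Step:
    return Step.DONE if text.strip().lower() == "yes" else Step.PROPOSE_OPTIONS


HANDLERS: Dict[Step, Callable[[Conversation, str], Step]] = {
    Step.IDENTIFY_INTENT: identify_intent,
    Step.AUTHENTICATE: authenticate,
    Step.RETRIEVE_DATA: retrieve_data,
    Step.PROPOSE_OPTIONS: propose_options,
    Step.CONFIRM_ACTION: confirm_action,
}
# Steps that need no user input and run immediately after the previous one.
AUTO_STEPS = {Step.RETRIEVE_DATA, Step.PROPOSE_OPTIONS}


def handle_turn(conv: Conversation, user_text: str) -> Step:
    """Run the current step, then any automatic steps, and log each transition."""
    if conv.step is Step.DONE:
        return Step.DONE
    while True:
        current = conv.step
        nxt = HANDLERS[current](conv, user_text)
        conv.trace.append((current.name, nxt.name))
        conv.step = nxt
        if nxt not in AUTO_STEPS:
            return nxt
```

Because every transition is appended to `trace`, a failed conversation can be localized to a single step rather than to one large prompt.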

Orchestration Is the Real Differentiator

By 2026, access to strong language models is largely commoditized. Choosing one frontier model over another is rarely a lasting competitive advantage. What truly differentiates systems is the orchestration layer around the model.

This includes how state is managed across turns, how tools are invoked, how fallbacks and escalation are handled, and how policies are enforced. Effective orchestration allows teams to reason about system behavior, measure outcomes, and ensure compliance even as models evolve.
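As a rough sketch of what such an orchestration layer might do around a single tool call, the example below applies a policy check first, retries on failure, and escalates when retries are exhausted. All names here (`ToolCall`, `Outcome`, `MAX_REFUND`, `issue_refund`, `lookup_order`) are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class ToolCall:
    name: str
    args: dict


@dataclass
class Outcome:
    status: str  # "ok", "blocked", or "escalated"
    detail: str


MAX_REFUND = 100.0  # hypothetical policy limit


def policy_allows(call: ToolCall) -> bool:
    # Policy lives in code, outside the prompt, so it holds even if the model changes.
    if call.name == "issue_refund":
        return call.args.get("amount", 0) <= MAX_REFUND
    return True


def run_tool(call: ToolCall, tools: Dict[str, Callable[..., str]],
             max_attempts: int = 2) -> Outcome:
    if call.name not in tools:
        return Outcome("escalated", f"unknown tool {call.name}")
    if not policy_allows(call):
        return Outcome("blocked", "policy limit exceeded")
    last_error = ""
    for _ in range(max_attempts):
        try:
            return Outcome("ok", tools[call.name](**call.args))
        except Exception as exc:  # broad on purpose: any tool failure counts
            last_error = str(exc)
    # Fallback path: hand off to a human once retries are exhausted.
    return Outcome("escalated", f"tool failed: {last_error}")


def issue_refund(amount: float) -> str:
    return f"refunded {amount:.2f}"


def lookup_order(order_id: str) -> str:
    raise TimeoutError("backend timeout")  # simulates a failing dependency


TOOLS = {"issue_refund": issue_refund, "lookup_order": lookup_order}
```

Returning a structured `Outcome` instead of free text gives the team something concrete to count and alert on.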

Retrieval and Grounding as Accuracy Infrastructure

As conversational AI systems handle policy-driven and high-stakes interactions, retrieval quality becomes critical. Builders must design knowledge systems that go beyond simple vector search. This includes section-aware indexing, curated Q&A for sensitive policies, and deliberate grounding strategies to ensure responses remain accurate and auditable.

Without strong retrieval design, flexible LLM responses increase the risk of hallucinations and inconsistent policy enforcement, especially in domains like healthcare, refunds, and compliance-heavy workflows.
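One way to picture section-aware indexing with curated answers is the sketch below. It is deliberately simplified: plain keyword overlap stands in for vector search, and the `CURATED` entry and sample policy text are invented for the example. The point is that every answer carries a source label, and the system declines when nothing grounds the reply.

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Set, Tuple


@dataclass(frozen=True)
class Chunk:
    doc: str
    section: str  # the section title travels with the chunk
    text: str


# Hypothetical curated answers for sensitive policies; checked before any search.
CURATED = {"refund window": "Refunds are accepted within 30 days of delivery."}


def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query_tokens: Set[str], chunk: Chunk) -> int:
    # Keyword overlap is a stand-in for embedding similarity.
    body_hits = len(query_tokens & set(tokenize(chunk.text)))
    title_hits = len(query_tokens & set(tokenize(chunk.section)))
    return body_hits + 2 * title_hits  # matches in the section title count double


def retrieve(query: str, chunks: List[Chunk]) -> Optional[Chunk]:
    query_tokens = set(tokenize(query))
    best = max(chunks, key=lambda c: score(query_tokens, c), default=None)
    if best is None or score(query_tokens, best) == 0:
        return None
    return best


def answer(query: str, chunks: List[Chunk]) -> Tuple[Optional[str], str]:
    """Return (text, source) so every reply can be audited."""
    lowered = query.lower()
    for key, text in CURATED.items():
        if key in lowered:
            return text, f"curated:{key}"
    chunk = retrieve(query, chunks)
    if chunk is None:
        return None, "no_grounding"  # caller should decline or escalate
    return chunk.text, f"{chunk.doc}#{chunk.section}"


CHUNKS = [
    Chunk("policy.md", "Shipping", "Orders ship within 2 business days."),
    Chunk("policy.md", "Returns", "Items can be returned unused in original packaging."),
]
```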

Cost- and Latency-Aware Architectures

Production conversational AI systems must actively manage cost and performance. Builders increasingly route simple queries to lightweight models while reserving stronger models for complex reasoning. Techniques such as caching, summarization, and context truncation are essential to maintain responsiveness and control operating costs.

These architectural choices are as important as model selection when deploying conversational AI at scale.
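The routing, caching, and truncation techniques above might look roughly like this. The model names `small-model` and `large-model`, the `COMPLEX_HINTS` keyword list, and the 30-word threshold are assumptions for illustration; a production router would more likely use a trained classifier and token-based budgets.

```python
from typing import Callable, Dict, List

# Hypothetical keywords that suggest a query needs stronger reasoning.
COMPLEX_HINTS = {"compare", "why", "explain", "dispute"}


def pick_model(query: str) -> str:
    words = [w.lower().strip("?.,!") for w in query.split()]
    needs_reasoning = any(w in COMPLEX_HINTS for w in words)
    return "large-model" if needs_reasoning or len(words) > 30 else "small-model"


class CachedRouter:
    """Routes each query to a model tier and caches answers to repeated queries."""

    def __init__(self, call_model: Callable[[str, str], str]):
        self._call = call_model
        self._cache: Dict[str, str] = {}
        self.calls = {"small-model": 0, "large-model": 0}

    def answer(self, query: str) -> str:
        key = " ".join(query.lower().split())  # normalize case and whitespace
        if key in self._cache:
            return self._cache[key]
        model = pick_model(query)
        self.calls[model] += 1
        result = self._call(model, query)
        self._cache[key] = result
        return result


def truncate_history(turns: List[str], max_chars: int) -> List[str]:
    """Keep the most recent turns that fit within a character budget."""
    kept: List[str] = []
    used = 0
    for turn in reversed(turns):
        if used + len(turn) > max_chars:
            break
        kept.append(turn)
        used += len(turn)
    return list(reversed(kept))


def fake_model(model: str, query: str) -> str:
    # Stand-in for a real model call.
    return f"[{model}] answer to: {query}"
```

Tracking `calls` per tier makes the cost split visible, which is what lets a team tune the routing rule with data.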

Testing, Regression Management, and Observability

Non-determinism is an inherent property of LLM-based systems. A change intended to fix one issue can silently break others. As a result, builders are investing early in scenario-based regression testing, where fixed conversational scenarios representing real user goals are re-run after every system change.
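A scenario-based regression suite can be as simple as the sketch below. The `Scenario` fields, the phrase-based checks, and `toy_agent` are hypothetical; a real suite would call the deployed agent and would likely use richer assertions than substring matching.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Scenario:
    name: str
    user_turns: List[str]
    must_contain: List[str] = field(default_factory=list)
    must_not_contain: List[str] = field(default_factory=list)


def check(reply: str, scenario: Scenario) -> List[str]:
    """Return a list of failure messages; an empty list means the scenario passed."""
    lowered = reply.lower()
    failures = [f"missing: {p}" for p in scenario.must_contain
                if p.lower() not in lowered]
    failures += [f"forbidden: {p}" for p in scenario.must_not_contain
                 if p.lower() in lowered]
    return failures


def run_suite(agent: Callable[[List[str]], str],
              scenarios: List[Scenario]) -> Dict[str, List[str]]:
    # Re-run the same fixed scenarios after every prompt, model, or tool change.
    return {s.name: check(agent(s.user_turns), s) for s in scenarios}


def toy_agent(user_turns: List[str]) -> str:
    # Stand-in for the real system under test.
    if any("refund" in t.lower() for t in user_turns):
        return "I can help with your refund. A human agent will review it."
    return "Sorry, I did not understand."


SCENARIOS = [
    Scenario("refund_request", ["I need a refund for my order"],
             must_contain=["refund"], must_not_contain=["guarantee"]),
    Scenario("gibberish_escalates", ["asdf"], must_contain=["human"]),
]

results = run_suite(toy_agent, SCENARIOS)
```

Because model output varies between runs, a practical suite would typically run each scenario several times and track the pass rate rather than a single pass or fail.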

Beyond testing, observability has become a first-class concern. Teams monitor resolution rates, escalation frequency, drop-offs, safety signals, and customer satisfaction in real time. Judge models and rule-based checks are used to score task success, tone, and policy adherence. Optimization is driven by evidence, not intuition.
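The rule-based side of this monitoring can be sketched as follows. The `Record` fields and the `FORBIDDEN` phrase list are assumptions made for the example; judge-model scoring is omitted, and only simple aggregate rates are computed.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Record:
    resolved: bool
    escalated: bool
    transcript: str


# Hypothetical phrases the agent must never say.
FORBIDDEN = ("guaranteed refund", "legal advice")


def rule_check(transcript: str) -> List[str]:
    """Return the forbidden phrases found in a transcript."""
    lowered = transcript.lower()
    return [phrase for phrase in FORBIDDEN if phrase in lowered]


def summarize(records: List[Record]) -> Dict[str, float]:
    total = len(records)
    if total == 0:
        return {"resolution_rate": 0.0, "escalation_rate": 0.0, "violation_rate": 0.0}
    violations = sum(1 for r in records if rule_check(r.transcript))
    return {
        "resolution_rate": sum(r.resolved for r in records) / total,
        "escalation_rate": sum(r.escalated for r in records) / total,
        "violation_rate": violations / total,
    }


RECORDS = [
    Record(True, False, "Your order ships tomorrow."),
    Record(True, False, "You will get a guaranteed refund."),
    Record(False, True, "Let me connect you with a specialist."),
    Record(False, False, "Sorry, I did not understand."),
]
```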

Classification and Analytics Still Matter

A common misconception is that LLMs eliminate the need for classification. In reality, builders still need to bucket conversations by type, outcome, and risk level to understand where systems fail and where to invest improvement effort. Without segmentation, averages hide critical weaknesses. Classification enables targeted fixes, better prioritization, and measurable progress.
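A small worked example shows how an average can hide a weak segment. The conversation log below is invented for illustration: the overall success rate looks healthy, while one bucket performs far worse.

```python
from collections import defaultdict
from typing import Dict, List


def overall_rate(conversations: List[dict]) -> float:
    if not conversations:
        return 0.0
    return sum(c["success"] for c in conversations) / len(conversations)


def success_rate_by(conversations: List[dict], key: str) -> Dict[str, float]:
    """Success rate per bucket, where `key` names the field to segment on."""
    totals: Dict[str, int] = defaultdict(int)
    wins: Dict[str, int] = defaultdict(int)
    for c in conversations:
        totals[c[key]] += 1
        wins[c[key]] += int(c["success"])
    return {bucket: wins[bucket] / totals[bucket] for bucket in totals}


# Made-up data: 15 FAQ conversations (14 succeed), 5 refund conversations (2 succeed).
LOGS = (
    [{"type": "faq", "success": True}] * 14
    + [{"type": "faq", "success": False}]
    + [{"type": "refund", "success": True}] * 2
    + [{"type": "refund", "success": False}] * 3
)

overall = overall_rate(LOGS)             # 16 of 20 conversations succeed
by_type = success_rate_by(LOGS, "type")  # refunds succeed only 2 times out of 5
```

The same function can segment by outcome or risk level, provided each record carries that field.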

Voice Stack Evolution: Pragmatism Over Hype

In 2026, most production voice systems rely on cascaded architectures that combine speech recognition, language models, and text-to-speech. This approach remains preferred for accuracy, control, and debuggability, particularly in bookings, payments, and compliance-heavy workflows. End-to-end speech models are promising, but they are harder to debug and less predictable. They are more likely to succeed first in unconstrained, experience-first scenarios such as coaching or outbound engagement.
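The debuggability argument for cascaded architectures can be made concrete with a short sketch. Each stage is a plain function, so its intermediate output and timing can be recorded separately. The three stub stages here are placeholders, not real speech or language models.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, List, Tuple


@dataclass
class TurnTrace:
    # One entry per stage: (stage name, preview of its output, elapsed milliseconds)
    stages: List[Tuple[str, str, float]] = field(default_factory=list)


def run_cascade(audio: bytes,
                asr: Callable[[bytes], str],
                llm: Callable[[str], str],
                tts: Callable[[str], bytes]) -> Tuple[bytes, TurnTrace]:
    """Run speech recognition, language model, and text-to-speech in sequence."""
    trace = TurnTrace()

    def timed(name: str, fn: Callable[[Any], Any], arg: Any) -> Any:
        start = time.perf_counter()
        out = fn(arg)
        elapsed_ms = (time.perf_counter() - start) * 1000
        trace.stages.append((name, repr(out)[:60], elapsed_ms))
        return out

    text = timed("asr", asr, audio)
    reply = timed("llm", llm, text)
    speech = timed("tts", tts, reply)
    return speech, trace


# Stub stages standing in for real components.
def stub_asr(audio: bytes) -> str:
    return audio.decode("utf-8")


def stub_llm(text: str) -> str:
    return f"You said: {text}"


def stub_tts(reply: str) -> bytes:
    return reply.encode("utf-8")


speech, trace = run_cascade(b"hello", stub_asr, stub_llm, stub_tts)
```

When a call goes wrong, the trace shows whether the transcript, the generated reply, or the synthesized audio was at fault, which is harder to establish with a single end-to-end speech model.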

Treating Conversational AI as Software Infrastructure

Organizations looking to build and deploy conversational AI increasingly structure it as a core software system rather than a one-off project. Business operations teams define SOPs, policies, and escalation rules. Engineering and AI teams own orchestration, integrations, testing, and observability. Conversation designers focus on tone, trust, and sensitive flows. This clear ownership enables repeatable deployments and reduces dependence on heavy professional services.

A common pattern among successful teams is to start narrow, deploy to a constrained use case, iterate through transcript review, and expand coverage deliberately. The goal is predictable outcomes and stable performance, not flashy demos that fail in production.

Why Use Murf’s AI Voice Agent?

As conversational AI systems mature into orchestrated, voice-enabled infrastructure, the underlying voice layer must meet production-grade requirements. Murf’s AI Voice Agent is designed for this reality.

Core Performance

- Ultra-low latency powered by in-house TTS models like Falcon, delivering total response latency of approximately 900 ms for natural, interruptible conversations.

- Lifelike conversational speech with 99.38% pronunciation accuracy across more than 150 voices.

- Seamless multilingual support across 35+ languages and accents.

Reliability and Control

- Built for cascaded voice architectures that prioritize accuracy and debuggability.

- Smooth warm handoffs to human agents for complex or high-risk interactions.

- Full control over dialing schedules, retry logic, voice selection, and scripts.

- Enterprise-grade security with secure cloud or on-prem deployment, encrypted data handling, and SOC 2 and GDPR compliance.

Murf’s AI Voice Agent aligns with the direction conversational AI is taking in 2026: reliable, orchestrated, multilingual systems that feel natural to users while being engineered with the rigor of serious backend infrastructure.

Conversational AI is transforming how businesses communicate by enabling systems to understand human language, generate natural replies, and respond appropriately across every customer interaction. These systems can answer frequently asked questions and handle complex customer inquiries with greater accuracy, while automating routine tasks and improving operational efficiency.
