What Is an RNN?

A recurrent neural network (RNN) is a type of neural network designed to process sequences of data by remembering what came before. Unlike feedforward networks, which treat each input independently, an RNN carries information from earlier steps forward so that it can influence the current prediction.

In simple terms, an RNN reads data one step at a time (like words in a sentence or frames of audio) and keeps a running summary of previous inputs, called the hidden state, to understand context.

This makes recurrent neural networks especially useful for tasks where order matters, such as language, speech, and time-based data, particularly in artificial intelligence (AI) systems.

How Does an RNN Work?

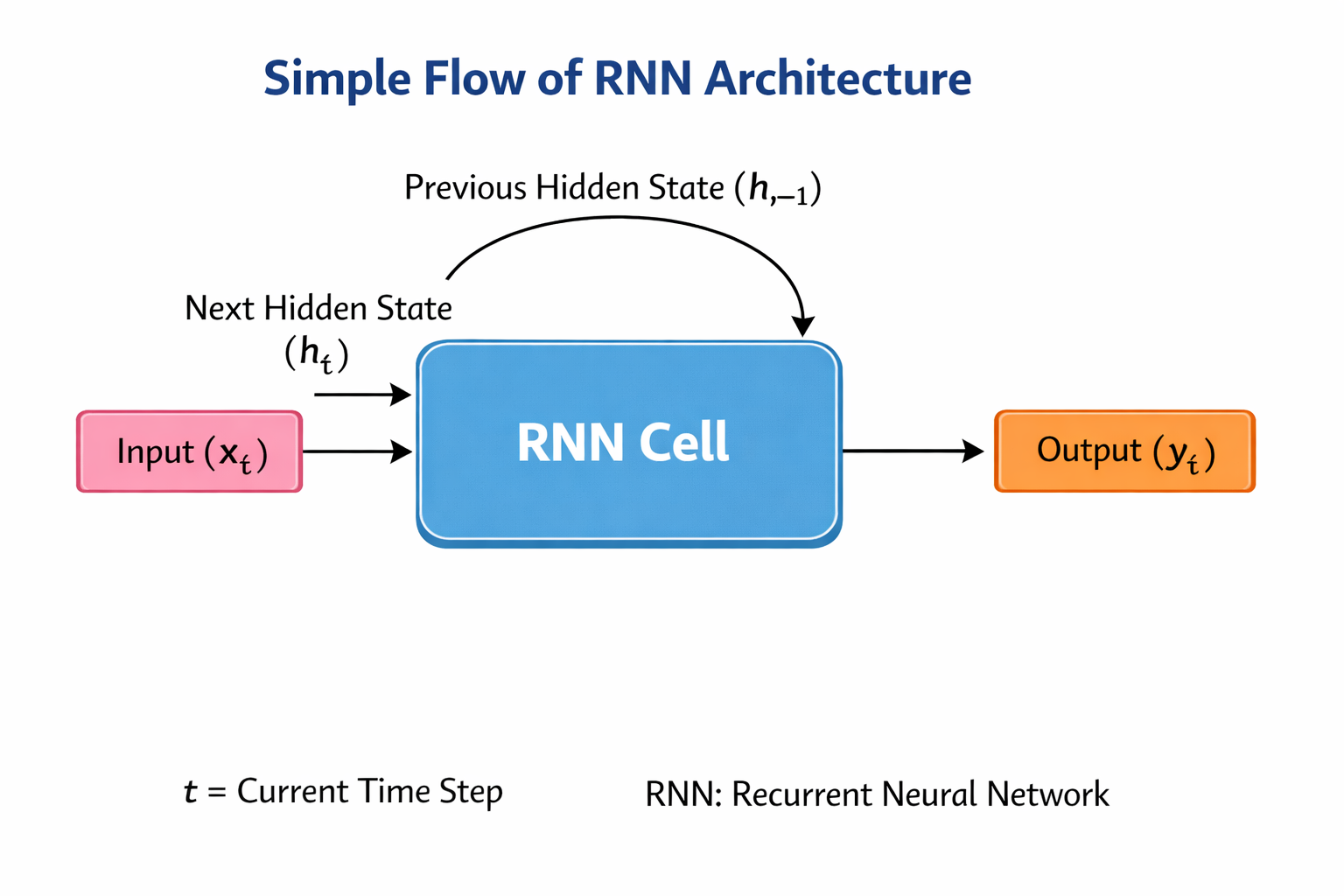

To understand how an RNN model works, think of it as a loop that carries information forward through an RNN cell.

At each step:

- The RNN cell receives an input (like a word or sound)

- It combines this input with the previous hidden state (its memory)

- It updates the hidden state to capture new information

- It produces an output for that step
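The four steps above can be sketched in a few lines of plain Python. This is a minimal illustration, assuming a basic tanh cell with a linear output layer; the names `rnn_step`, `run_rnn`, and `params`, and the hand-picked weight values, are hypothetical and not taken from any particular library.

```python
import math

def rnn_step(x, h_prev, W_xh, W_hh, b_h, W_hy, b_y):
    """One step of a basic RNN cell: returns (new hidden state, output)."""
    h_new = []
    for i in range(len(h_prev)):
        # Combine the current input with the previous hidden state.
        total = b_h[i]
        total += sum(W_xh[i][j] * x[j] for j in range(len(x)))
        total += sum(W_hh[i][k] * h_prev[k] for k in range(len(h_prev)))
        # tanh squashes each hidden value into the range (-1, 1).
        h_new.append(math.tanh(total))
    # Read an output for this step from the updated hidden state.
    y = [
        b_y[o] + sum(W_hy[o][i] * h_new[i] for i in range(len(h_new)))
        for o in range(len(b_y))
    ]
    return h_new, y

def run_rnn(inputs, params, hidden_size):
    """Apply the same cell, with the same weights, to every step in order."""
    h = [0.0] * hidden_size
    outputs = []
    for x in inputs:
        h, y = rnn_step(x, h, *params)
        outputs.append(y)
    return h, outputs

# Hand-picked toy weights (assumed, not trained): 2 inputs, 2 hidden units, 1 output.
params = (
    [[0.5, -0.3], [0.1, 0.8]],   # W_xh: input -> hidden
    [[0.2, 0.0], [-0.4, 0.3]],   # W_hh: hidden -> hidden (the "loop")
    [0.0, 0.0],                  # b_h
    [[1.0, -1.0]],               # W_hy: hidden -> output
    [0.0],                       # b_y
)

sequence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
final_h, outputs = run_rnn(sequence, params, hidden_size=2)
reversed_h, _ = run_rnn(sequence[::-1], params, hidden_size=2)
```

Feeding the same three inputs in reverse order leaves the cell in a different final hidden state, which is the sense in which an RNN is sensitive to order.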

Simple Flow of RNN Architecture

This looping structure is called the RNN architecture. Because the same cell, with the same weights, is applied at every step, the model can learn patterns that unfold over time.

Unlike traditional feedforward models that treat each input independently, RNNs connect past and present data, which helps them model sequences more effectively.

Common Variants of the RNN Model

Basic RNNs can struggle to learn relationships across very long sequences. Training them on lengthy inputs can cause a problem known as the vanishing gradient problem, where the error signal used to update the network shrinks as it is propagated backward through the sequence and fades before it reaches earlier steps. Practitioners generally use gated variants to mitigate this.
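The effect can be seen numerically in a deliberately tiny setting. The sketch below assumes a scalar RNN of the form h_t = tanh(w * h_{t-1} + x_t) with an illustrative weight of 0.9, and tracks how strongly the state at each step still depends on the starting state; the function name `gradient_through_time` is hypothetical.

```python
import math

def gradient_through_time(w, inputs, h0=0.0):
    """Return |d h_t / d h_0| after each step of a scalar RNN
    defined by h_t = tanh(w * h_{t-1} + x_t)."""
    h = h0
    grad = 1.0
    history = []
    for x in inputs:
        h = math.tanh(w * h + x)
        # Chain rule: each step multiplies the gradient by
        # w * tanh'(pre-activation), and tanh'(a) = 1 - tanh(a)^2.
        grad *= w * (1.0 - h * h)
        history.append(abs(grad))
    return history

# Assumed toy setting: recurrent weight 0.9, constant input 0.5, 50 steps.
grads = gradient_through_time(w=0.9, inputs=[0.5] * 50)
print(f"after 1 step:   {grads[0]:.3e}")
print(f"after 50 steps: {grads[-1]:.3e}")
```

Each step multiplies the gradient by a factor smaller than one, so its magnitude decays roughly exponentially with sequence length. Real networks use weight matrices rather than a single number, but the same repeated multiplication is what causes the signal to fade.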

Two widely used gated variants are:

- LSTM (Long Short-Term Memory): An RNN variant designed to retain useful information over longer stretches of a sequence while discarding what is no longer relevant.

- GRU (Gated Recurrent Unit): A streamlined alternative to LSTM that uses a similar gating approach but with a simpler structure.

Both LSTM and GRU are considered types of recurrent neural networks and appear across voice, language, and audio applications.
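To make the gating idea concrete, here is a minimal sketch of a single GRU step in plain Python. It assumes the common formulation with an update gate and a reset gate; the parameter dictionary layout, the helper names, and the toy weights are hypothetical. Note that references differ on which of the two terms in the final blend is multiplied by the update gate, so treat the convention used here as one of two equivalent choices.

```python
import math

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def affine(W, U, b, x, h):
    """Compute W @ x + U @ h + b for small list-based matrices."""
    return [
        sum(W[i][j] * x[j] for j in range(len(x)))
        + sum(U[i][k] * h[k] for k in range(len(h)))
        + b[i]
        for i in range(len(b))
    ]

def gru_step(x, h_prev, p):
    """One GRU step; `p` is a dict of weights (hypothetical layout)."""
    # Update gate: how much of the old state to overwrite.
    z = [sigmoid(v) for v in affine(p["Wz"], p["Uz"], p["bz"], x, h_prev)]
    # Reset gate: how much of the old state feeds the candidate.
    r = [sigmoid(v) for v in affine(p["Wr"], p["Ur"], p["br"], x, h_prev)]
    h_reset = [ri * hi for ri, hi in zip(r, h_prev)]
    h_cand = [math.tanh(v) for v in affine(p["Wh"], p["Uh"], p["bh"], x, h_reset)]
    # Blend the old state with the candidate, element by element.
    return [(1 - zi) * hi + zi * ci for zi, hi, ci in zip(z, h_prev, h_cand)]

def zeros(rows, cols):
    return [[0.0] * cols for _ in range(rows)]

def toy_params(update_bias):
    """Assumed toy weights: 1 input, 2 hidden units, gates driven by bias only."""
    return {
        "Wz": zeros(2, 1), "Uz": zeros(2, 2), "bz": [update_bias] * 2,
        "Wr": zeros(2, 1), "Ur": zeros(2, 2), "br": [0.0] * 2,
        "Wh": [[1.0], [-1.0]], "Uh": zeros(2, 2), "bh": [0.0] * 2,
    }

h_prev = [0.7, -0.2]
kept = gru_step([1.0], h_prev, toy_params(update_bias=-20.0))     # gate nearly closed
replaced = gru_step([1.0], h_prev, toy_params(update_bias=20.0))  # gate nearly open
```

With the update gate nearly closed, the old state passes through almost unchanged, which is how a gated cell can hold information across many steps. With the gate nearly open, the state is overwritten by the new candidate.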

Why RNNs Matter in AI

RNNs play an important role in AI systems that need to understand sequences.

They help systems:

- Understand language flow in sentences

- Process time-based signals like speech

- Predict future values based on past data

- Maintain context across inputs

Because of this, RNNs have been widely used in natural language processing (NLP) and speech-to-text (STT) systems. They can also handle many of the tasks now associated with large language models (LLMs), although modern LLMs are typically built on transformer architectures instead.

Applications of Recurrent Neural Networks

RNNs are used in many real-world AI systems where data comes in sequences.

1. Speech Recognition

RNNs are used in speech recognition systems to process audio over time. They help models understand how sounds form words and sentences.

2. Text Generation

RNNs can generate text by predicting the next word based on previous words. This is useful in chatbots and tools powered by generative AI.
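The generation loop itself is short. The sketch below works at the character level rather than the word level to keep the vocabulary tiny, and it uses random, untrained weights, so the text it produces is gibberish; the point is only to show how each sampled symbol and the hidden state are fed back into the next step. All names and sizes here are assumptions made for illustration.

```python
import math
import random

VOCAB = list("helo ")  # toy character vocabulary (assumed)

def make_weights(rng, rows, cols, scale=0.5):
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]

def matvec(W, v):
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]

def generate(start_char, length, hidden_size=8, seed=0):
    """Sample `length` characters from an untrained character-level RNN."""
    rng = random.Random(seed)
    V = len(VOCAB)
    W_xh = make_weights(rng, hidden_size, V)
    W_hh = make_weights(rng, hidden_size, hidden_size)
    W_hy = make_weights(rng, V, hidden_size)

    h = [0.0] * hidden_size
    idx = VOCAB.index(start_char)
    out = [start_char]
    for _ in range(length):
        # One-hot encode the most recent character.
        x = [1.0 if i == idx else 0.0 for i in range(V)]
        # Update the hidden state from the input and the previous state.
        h = [math.tanh(a + b) for a, b in zip(matvec(W_xh, x), matvec(W_hh, h))]
        # Turn the hidden state into a distribution over the next character.
        probs = softmax(matvec(W_hy, h))
        # Sample the next character and feed it back in on the next pass.
        idx = rng.choices(range(V), weights=probs, k=1)[0]
        out.append(VOCAB[idx])
    return "".join(out)

sample = generate("h", 10)
```

A trained model would use the same loop; only the weights, learned from example text, would differ.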

3. Machine Translation

RNN models help translate languages by reading the source sentence to capture its structure and context, then generating the translation one word at a time, typically in an encoder-decoder arrangement.

4. Time-Series Prediction

In finance or weather forecasting, RNNs analyze past observations to estimate likely future values.
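Before a sequence model can be trained on a time series, the series is usually cut into (past window, next value) pairs. The helper below is a small sketch of that preparation step; `make_windows` and the temperature readings are made up for illustration.

```python
def make_windows(series, window):
    """Split a series into (past values, next value) training pairs."""
    pairs = []
    for i in range(len(series) - window):
        past = series[i:i + window]   # what the model sees
        target = series[i + window]   # what it should predict
        pairs.append((past, target))
    return pairs

# Hypothetical daily temperature readings.
temps = [20.1, 20.5, 21.0, 21.4, 22.0, 21.8]
pairs = make_windows(temps, window=3)
```

Each window is then fed to the RNN one value at a time, and the final hidden state is used to predict the target.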

5. Voice AI Systems

In voice workflows, RNNs help process sequential audio data. Combined with technologies like text-to-speech (TTS) and a vocoder, they contribute to natural voice generation pipelines.

On voice platforms like Murf, sequence-based models of this kind shape how audio is processed and structured before the final output.

Examples of RNN in Action

Here are simple, real-world examples of how RNNs work, especially in voice and language systems:

1. Typing assistants

RNN-based language models power predictive text by analysing the sequence of words you’ve already typed. They use past context to suggest the next word, making typing faster and more accurate.

2. Voice assistants

When you speak to a voice assistant, the system processes audio over time using speech-to-text. RNNs help interpret the sequence of sounds, so the system understands your full command instead of isolated words.

3. Text-to-speech (TTS) systems

In text-to-speech pipelines, RNNs help model how speech flows over time. They contribute to generating natural-sounding rhythm, pauses, and intonation by learning sequence patterns in language and audio.

4. Music and audio generation

RNNs have been used to generate sequences like music or speech waveforms. They predict the next note or audio sample based on previous ones, which helps keep the output smooth and coherent.

5. Chat and conversational AI systems

In conversational AI, RNNs help maintain context across multiple turns. This allows systems to respond more naturally instead of treating every message as a completely new input.

Limitations of RNNs

Although RNNs are powerful, they have some challenges:

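- Difficulty retaining information across very long sequences

- Slower training compared to newer models, because each step depends on the previous one and the steps cannot be processed in parallel

- Vanishing gradient problem (the training signal fades as it is propagated back through many steps)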

Because of this, newer models like transformers are often preferred for large-scale tasks.

Future of RNNs

Even though newer architectures exist, RNNs are still useful for many applications. They remain relevant in lightweight AI systems, real-time processing tasks, and voice and audio pipelines. As AI evolves, RNNs continue to support systems that require sequential understanding, especially in speech and time-based data, often as one component within larger deep learning (DL) and natural language processing (NLP) systems.