Speech-to-Speech vs STT-LLM-TTS: Which architecture should you build your voice agent on?


Every product leader building voice AI has had the same moment. You watch a demo of a speech-to-speech model. It listens, responds with almost no lag, and sounds human. And you think: why am I still stitching together an STT-LLM-TTS pipeline?

Speech-to-speech models have made real progress on latency, prosody, and conversational naturalness. But a demo is not the same as deployment. The architecture you choose is one of the most consequential decisions you'll make, and getting it wrong is expensive to reverse.

This article breaks down both architectures across the dimensions that actually determine whether a voice AI product survives production: latency, cost at scale, voice quality and variety, control and debuggability, and compliance. Not to declare a winner, but to give you the framework to make the right call for what you're building.

How Each Architecture Works

The STT-LLM-TTS pipeline is sequential. An ASR model transcribes the user's audio into text, an LLM generates a response, and a TTS engine converts that response into speech. Modern implementations stream partial transcripts to the LLM before the user finishes speaking, and overlap synthesis with generation to reduce latency.
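To make the sequential flow concrete, here is a minimal sketch of the three stages as chained Python generators. Every function here is a hypothetical stub rather than a real ASR, LLM, or TTS API, and the sketch treats each audio chunk as one word purely for illustration. Because generators are lazy, the synthesis stage consumes each token as soon as the generation stage yields it, which is the overlap described above. Starting the LLM speculatively on partial transcripts is left out to keep the sketch short.

```python
from typing import Iterable, Iterator, List


def transcribe_stream(audio_chunks: Iterable[bytes]) -> Iterator[str]:
    """Stub ASR: yields a growing partial transcript, one per audio chunk."""
    words: List[str] = []
    for chunk in audio_chunks:
        words.append(chunk.decode("utf-8"))  # assumption: one chunk == one word
        yield " ".join(words)


def generate_stream(transcript: str) -> Iterator[str]:
    """Stub LLM: yields response tokens one at a time."""
    for token in f"You said: {transcript}".split():
        yield token


def synthesize_stream(tokens: Iterable[str]) -> Iterator[bytes]:
    """Stub TTS: emits 'audio' for each token as soon as it arrives."""
    for token in tokens:
        yield token.encode("utf-8")


def run_turn(audio_chunks: Iterable[bytes]) -> List[bytes]:
    """Runs one user turn through ASR, then LLM and TTS as a lazy chain."""
    transcript = ""
    for partial in transcribe_stream(audio_chunks):
        transcript = partial  # keep the latest (most complete) partial
    # generate_stream and synthesize_stream are chained lazily, so each
    # token is synthesized before the next one is generated.
    return list(synthesize_stream(generate_stream(transcript)))


audio_out = run_turn([b"hello", b"world"])
```

In a real deployment each stage would be a network call with its own latency, which is where the coordination overhead between components comes from.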

Speech-to-speech models collapse this into a single model. Speech representations go in, speech representations come out. Without a text intermediary, the model preserves paralinguistic signals such as tone, pacing, and hesitation. The latency gains are real, but so are the trade-offs.

Where speech-to-speech genuinely wins

Latency

Both architectures are equally subject to network latency in cloud deployments. The difference is in orchestration. A well-optimised STT-LLM-TTS pipeline reduces perceived latency by streaming partial transcripts to the LLM before the user finishes speaking and beginning synthesis before the full response is generated. But the hand-offs between components still add coordination overhead.

Speech-to-speech models reduce this by operating over continuous audio representations with no text bottleneck in between. The advantage is less about eliminating delay and more about faster reaction times and smoother turn-taking, particularly in rapid back-and-forth conversations.

Prosody Preservation

When speech is reduced to a plain transcript, much of the non-lexical signal (hesitation, pacing, emphasis, emotional tone, timing) is compressed or lost unless it is explicitly modeled and passed downstream. A text-first LLM can only reason over the signals it receives, which means expressive nuance often has to be reconstructed during TTS. Speech-to-speech systems retain richer speech representations throughout the loop, making it easier to preserve tone, pacing, and conversational intent rather than approximating them.

Interruption Handling

Speech-native, full-duplex systems can listen and generate simultaneously, which enables more natural barge-in and mid-utterance adaptation. They are less reliant on a fully stabilized transcript and can adjust responses in a continuous loop.

Cascaded STT → LLM → TTS pipelines can also support interruptions, but this requires tighter coordination across VAD, partial transcripts, generation control, and TTS cancellation. The added orchestration can make interruptions feel more structured and slightly less fluid than in tightly integrated speech-native systems.
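As a rough illustration of the coordination a cascaded pipeline needs for barge-in, the sketch below runs playback as an `asyncio` task and cancels it when the user starts speaking. The `speak` coroutine is a hypothetical stand-in for TTS playback, and the timed sleep in `run_with_barge_in` stands in for a VAD event. A real system would also need to cancel in-flight LLM generation and tell the LLM how much of the response was actually heard.

```python
import asyncio
from typing import List


async def speak(chunks: List[int], played: List[int]) -> None:
    """Stub TTS playback: 'plays' one chunk at a time."""
    for chunk in chunks:
        played.append(chunk)
        await asyncio.sleep(0.01)  # stands in for the chunk's playback time


async def run_with_barge_in(chunks: List[int], interrupt_after: float) -> List[int]:
    """Starts playback, then cancels it when the (simulated) user interrupts."""
    played: List[int] = []
    playback = asyncio.create_task(speak(chunks, played))
    await asyncio.sleep(interrupt_after)  # stands in for VAD detecting speech
    playback.cancel()                     # TTS cancellation
    try:
        await playback
    except asyncio.CancelledError:
        pass  # expected: playback was interrupted mid-utterance
    return played  # the chunks the user actually heard


played = asyncio.run(run_with_barge_in(list(range(100)), interrupt_after=0.05))
```

The returned list records what was played before the interruption, which is the information the next LLM turn would need in order to respond coherently.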

For consumer-facing, conversational applications where naturalness is the primary metric, speech-to-speech is a compelling choice. The challenge is that most enterprise voice AI deployments are not optimising for naturalness alone, and that is where the differences become more apparent.

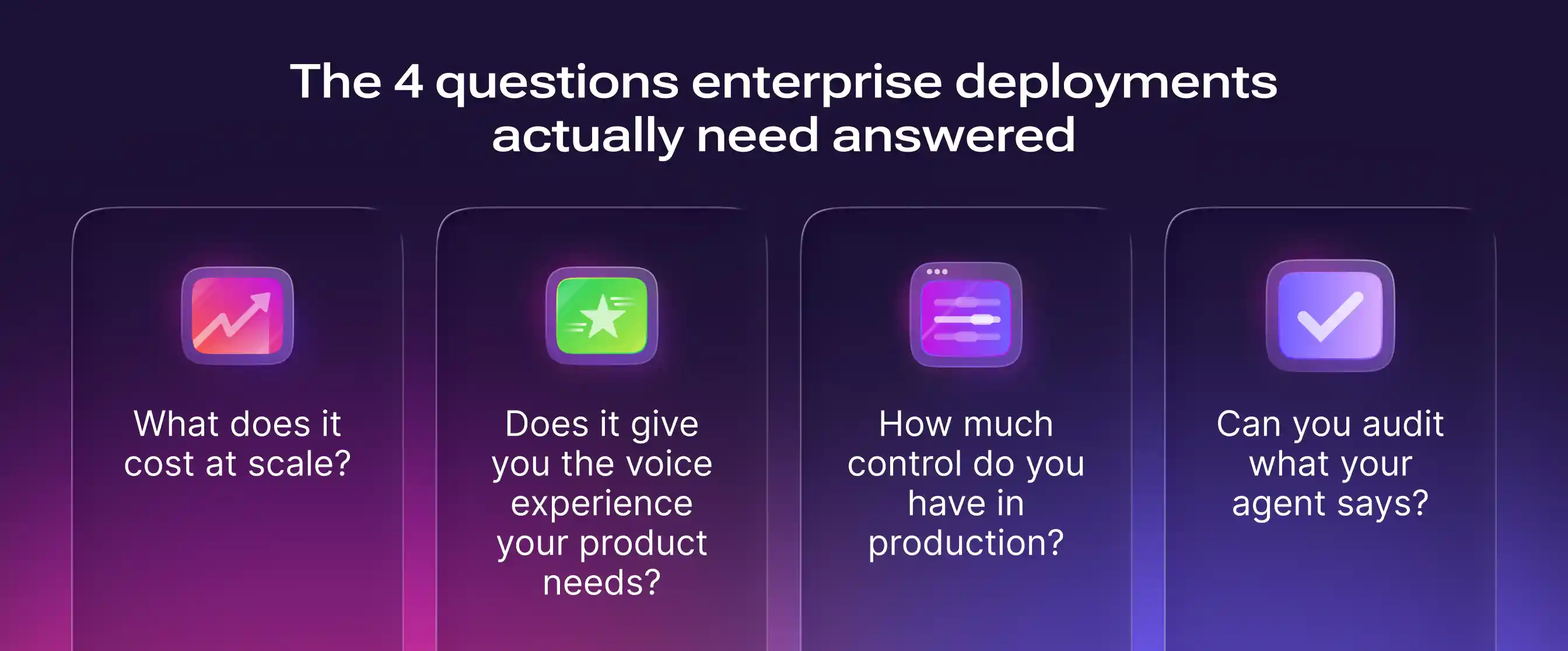

The four questions enterprise deployments actually need answered

When a voice AI product moves from pilot to production, the evaluation criteria shift. Naturalness matters, but it sits alongside four questions that tend to determine whether a deployment succeeds at scale or stalls.

Q1: What does it cost at scale?

Speech-to-speech systems bundle transcription, reasoning, and synthesis into a single API, which simplifies integration but can make cost scale directly with both input and output audio duration.

Here is what the breakdown looks like, assuming each call lasts 5 minutes (3 minutes of input audio and 2 minutes of output). The models used in this breakdown are listed alongside.

Note: Assumes moderate token usage per call. Actual costs vary by deployment, scale, and hosting architecture. Telephony and platform fees are excluded.

At 100 calls a day, the difference is roughly $21,000 a year.

A volume of 100 calls a day is a very conservative estimate; a fully functioning contact center can run to millions of calls.

Even small per-call differences translate into significant annual spend in high-volume deployments.
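The scaling can be checked with simple arithmetic. The sketch below backs out the per-call difference implied by the figures above (roughly $21,000 a year at 100 calls a day) and projects it to a larger volume. The 365-day year and the 10,000-calls-a-day volume are assumptions chosen for illustration, not figures from the cost breakdown.

```python
CALLS_PER_DAY = 100             # from the estimate above
ANNUAL_DIFFERENCE_USD = 21_000  # from the estimate above (approximate)
DAYS_PER_YEAR = 365             # assumption: calls run every day of the year


def implied_per_call_difference(annual_usd: float, calls_per_day: int,
                                days: int = DAYS_PER_YEAR) -> float:
    """Per-call cost difference implied by an annual difference."""
    return annual_usd / (calls_per_day * days)


def annual_difference(per_call_usd: float, calls_per_day: int,
                      days: int = DAYS_PER_YEAR) -> float:
    """Annual cost difference at a given daily call volume."""
    return per_call_usd * calls_per_day * days


per_call = implied_per_call_difference(ANNUAL_DIFFERENCE_USD, CALLS_PER_DAY)
print(f"Implied difference per call: ${per_call:.3f}")

# Hypothetical larger deployment: 10,000 calls a day.
projected = annual_difference(per_call, 10_000)
print(f"Projected annual difference: ${projected:,.0f}")
```

Because the relationship is linear, a hundredfold increase in volume produces a hundredfold increase in the annual difference, assuming per-call costs stay the same.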

There is also a predictability problem with speech-to-speech (S2S) pricing. Cost scales with both input and output audio duration, and longer system prompts or more complex multi-turn conversations can increase the underlying reasoning load, pushing per-call cost above the baseline depending on how the provider meters usage. In a pipeline, each component is billed independently with clear unit economics. You can optimise at each layer: use a lighter LLM for simpler queries, a more cost-efficient ASR model for high-volume transcription, or switch your TTS provider without touching the rest of the stack.

It is worth noting that S2S pricing will likely come down as the technology matures. But the pipeline's cost advantage is structural, not just a function of today's pricing. Even as S2S gets cheaper, the ability to optimise each component independently will continue to give the pipeline an edge for high-volume deployments.

Q2: Does it give you the voice experience your product needs?

Voice is a brand touchpoint. The voice your agent uses shapes how users perceive your product, and for enterprise deployments serving customers across markets and languages, that choice needs to be deliberate.

The comparison below is between S2S as a complete system and just the TTS layer of the pipeline, which is one independently swappable component.

The gap is significant. For a single-language consumer product where brand voice is not a priority, 10 voices may be sufficient. For any enterprise deployment serving multiple markets, regulated industries, or a brand that invests in how it sounds, the pipeline's flexibility is a requirement.

Q3: How much control do you have in production?

As voice systems move from pilot to production, control becomes a primary consideration. Speech-to-speech systems operate as tightly integrated loops where speech understanding, reasoning, and response generation are coupled. This enables fluid interactions but reduces the points of intervention. When something goes wrong, it is harder to identify whether the issue came from understanding, logic, or synthesis because the layers are not independently visible.

Pipeline architectures expose each stage. Transcripts, prompts, tool calls, and outputs are observable, which makes debugging, iteration, and behaviour tuning more straightforward. Product teams can adjust specific components without redesigning the entire system. Controllability in S2S systems is improving with better streaming events and interfaces, but the control surface is still narrower than modular pipelines. At a small scale, this gap is manageable. At production scale, it becomes operationally significant because control determines how reliably the system can be monitored, tuned, and maintained over time.
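One way to picture this observability is a per-turn trace that records the input, output, and duration of every stage. The sketch below is a minimal illustration: `TurnTrace`, `StageRecord`, and the three `fake_*` stages are hypothetical names, not part of any particular framework.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, List


@dataclass
class StageRecord:
    stage: str
    input: Any
    output: Any
    seconds: float


@dataclass
class TurnTrace:
    """Collects one record per pipeline stage for a single conversational turn."""
    records: List[StageRecord] = field(default_factory=list)

    def run(self, stage: str, fn: Callable[[Any], Any], value: Any) -> Any:
        start = time.perf_counter()
        result = fn(value)
        elapsed = time.perf_counter() - start
        self.records.append(StageRecord(stage, value, result, elapsed))
        return result


# Hypothetical stand-ins for real ASR, LLM, and TTS calls.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(text: str) -> str:
    return f"Echo: {text}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


trace = TurnTrace()
transcript = trace.run("stt", fake_stt, b"check my order")
reply = trace.run("llm", fake_llm, transcript)
audio = trace.run("tts", fake_tts, reply)

for record in trace.records:
    print(record.stage, repr(record.input), "->", repr(record.output))
```

When a turn goes wrong, the trace shows whether the transcript, the LLM reply, or the synthesis input was at fault, which is the separation that a tightly coupled S2S loop makes harder to see.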

Q4: Can you audit what your agent says?

In regulated industries, compliance isn't just about where data lives. It's about what your agent is allowed to say, and whether you can prove it said the right thing.

S2S systems can support compliance controls, but doing so typically requires additional layers. Teams may run parallel transcription, apply real-time audio scanning, or encode guardrails in system prompts. All of this is possible, but it adds latency and complexity, and it relies on probabilistic enforcement.

Pipeline architectures provide a clearer control point at the text layer before synthesis. Content can be filtered, rewritten, or blocked before it is ever spoken, which makes compliance enforcement more direct and auditable.
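A text-layer control point can be sketched as a gate that sits between the LLM and the TTS engine. The example below is a simplified illustration: `ComplianceGate`, the regex rule, and the fallback message are all hypothetical, and a production system would likely combine rules like these with classifiers and human-reviewed policies.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ComplianceGate:
    """Reviews LLM text before it is sent to TTS and logs every decision."""
    blocked_patterns: List[str]
    fallback: str = "I can't help with that directly. Let me connect you with a specialist."
    audit_log: List[Dict[str, Optional[str]]] = field(default_factory=list)

    def review(self, text: str) -> str:
        """Returns the text to synthesize: the original, or the fallback if blocked."""
        for pattern in self.blocked_patterns:
            if re.search(pattern, text, flags=re.IGNORECASE):
                self.audit_log.append(
                    {"input": text, "action": "blocked", "rule": pattern}
                )
                return self.fallback
        self.audit_log.append({"input": text, "action": "allowed", "rule": None})
        return text


# Hypothetical rule for a financial-services agent.
gate = ComplianceGate(blocked_patterns=[r"guaranteed returns?"])

safe = gate.review("Your balance is $40.")
blocked = gate.review("This fund has guaranteed returns.")
```

The blocked sentence never reaches synthesis, and the audit log records both what the model proposed and which rule stopped it. That is what makes this check deterministic and provable, as opposed to a guardrail encoded in a system prompt.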

For regulated environments, this distinction is operationally important. Post-output detection and prompt-based prevention may be sufficient for some use cases, but stricter compliance frameworks often require pre-output control and traceability. Achieving that level of assurance on top of S2S is feasible, but it typically involves additional engineering and parallel control mechanisms.

So which architecture should you build on?

The choice ultimately depends on what your voice AI is expected to do in production.

Many voice AI systems are conversational, but not all conversational systems operate under the same constraints. Some are open-ended and experience-led, where the primary goal is fluid interaction and user engagement. In these settings, variability is acceptable, integration requirements are minimal, operational complexity is lower, and error tolerance is high. S2S architectures are a fit here. They excel at preserving prosody, reducing latency, and producing interactions that feel genuinely human. This holds where the cost can be justified: high-end consumer apps like AI coaching tools or language tutoring platforms charge premium subscriptions for extended voice interactions and can absorb the higher per-minute cost.

Enterprise voice systems are also conversational, but they are task-driven and operational. They handle high-stakes customer support workflows, financial transactions, healthcare intake, scheduling, and other structured processes. These systems must integrate with backend tools, enforce compliance before output, remain observable and debuggable, and operate under cost constraints at scale.

The architectural decision, therefore, is not simply about naturalness. It is about operational fit. That said, modular pipelines are not inherently robotic: careful tuning of TTS prosody, low-latency streaming, and thoughtful turn-taking logic can close much of the naturalness gap in practice. The ceiling is lower than with S2S, but for most enterprise use cases, it is high enough. And the trade is worth it: if the product must meet enterprise-grade requirements around compliance, reliability, observability, and cost control at scale, modular pipelines remain the more pragmatic choice today.

Where does this go from here?

S2S technology is advancing rapidly and the gap between what it can do in a demo and what it can do in production is closing. There will be a point where S2S is a credible choice for a much broader range of enterprise deployments.

Today, most enterprise voice systems still favour STT-LLM-TTS pipelines. Not because they are more exciting, but because they are more controllable, more debuggable, more cost-efficient at scale, and more compatible with the compliance requirements that regulated industries cannot work around. The modularity that makes them feel less elegant is exactly what makes them production-ready.
